Gambling at a slot machine or playing the lottery is a classic example of a variable ratio reinforcement schedule [5]: wins come unpredictably, and each one takes a different number of lever pulls. A casino adds many response options on top of that. Each slot machine runs on its own schedule of reinforcement, and you are free to play any of the machines and to switch from one to another at any time.

Schedules of reinforcement can affect the results of operant conditioning, which is used frequently in everyday life, for example in the classroom and in parenting. Let’s examine the common types of schedules and their applications.

Schedules Of Reinforcement

Operant conditioning is a process of learning through association, in which reinforcement or punishment is used to increase or decrease a voluntary behavior.

Schedules of reinforcement are the rules that control the timing and frequency of reinforcer delivery. They aim to make a target behavior more likely to happen again, to strengthen it, or to keep it going.

A schedule of reinforcement is a contingency schedule: reinforcers are applied only after the target behavior has occurred, so the reinforcement is contingent on the desired behavior [1].

There are two main categories of schedules: intermittent and non-intermittent.

Non-intermittent schedules apply either reinforcement or no reinforcement at all after each correct response, while intermittent schedules apply reinforcers after some, but not all, correct responses.

Non-intermittent Schedules of Reinforcement

Two types of non-intermittent schedules are Continuous Reinforcement Schedule and Extinction.

Continuous Reinforcement

A continuous reinforcement schedule (CRF) presents the reinforcer after every performance of the desired behavior. This schedule reinforces the target behavior every single time it occurs and is the quickest way to teach a new behavior.

Continuous Reinforcement Examples

e.g. Continuous schedules of reinforcement are often used in animal training. The trainer rewards the dog to teach it new tricks. When the dog does a new trick correctly, its behavior is reinforced every time by a treat (positive reinforcement).

e.g. A continuous schedule also works well for teaching very young children simple behaviors such as potty training. Toddlers are given candy whenever they use the potty, so their behavior is reinforced every time they succeed.

Partial Schedules of Reinforcement (Intermittent)

Once a new behavior is learned, trainers often turn to another type of schedule, a partial (or intermittent) reinforcement schedule, to strengthen the new behavior.

A partial or intermittent reinforcement schedule rewards desired behaviors occasionally, but not every single time.

Behavior reinforced on a partial schedule is usually stronger, in the sense that it is more resistant to extinction (more on this later). Therefore, after a new behavior is learned using a continuous schedule, an intermittent schedule is often applied to maintain or strengthen it.

Many different types of intermittent schedules are possible. The four major types in common use are built from two dimensions: whether reinforcement depends on the time elapsed (interval) or on the number of responses made (ratio), and whether that requirement is fixed or variable.

The four resulting intermittent reinforcement schedules are:

- Fixed interval schedule (FI)

- Fixed ratio schedule (FR)

- Variable interval schedule (VI)

- Variable ratio schedule (VR)

Fixed Interval Schedule (FI)

Interval schedules reinforce the first occurrence of the target behavior after a certain amount of time has passed since the previous reinforcement.

A fixed interval schedule rewards the first response made after a set amount of time has elapsed. This schedule usually trains subjects (a person, animal, or other organism) to time the interval: they slow down their responding right after a reinforcement and then respond faster and faster toward the end of the interval.

A “scalloping” pattern of break-run behavior is characteristic of this type of reinforcement schedule. The subject pauses after each reinforcement is delivered, and responding then speeds up as the next reinforcement approaches [2].
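The FI rule is simple enough to state as code. The Python sketch below is illustrative only: the class name `FixedInterval`, the 60-second interval, and the response times are hypothetical, and the session is assumed to start at t = 0. It shows why early responses are wasted on this schedule: only the first response after the interval has elapsed earns a reinforcer.

```python
class FixedInterval:
    """Sketch of a fixed interval (FI) schedule.

    The first response made at least `interval` seconds after the previous
    reinforcement (or after the session starts) earns a reinforcer.
    Responses made earlier in the interval earn nothing.
    """

    def __init__(self, interval: float) -> None:
        self.interval = interval
        self.last_reinforced = 0.0  # assumption: the session starts at t = 0

    def respond(self, t: float) -> bool:
        """Record a response at time t (seconds); return True if reinforced."""
        if t - self.last_reinforced >= self.interval:
            self.last_reinforced = t  # the next interval starts now
            return True
        return False


if __name__ == "__main__":
    fi = FixedInterval(interval=60)  # hypothetical FI 60 s schedule
    responses = [10, 30, 59, 61, 70, 100, 125]
    print([t for t in responses if fi.respond(t)])  # [61, 125]
```

Only the responses at 61 s and 125 s are reinforced; those at 10, 30, 59, 70, and 100 s earn nothing, which is the contingency that makes pausing right after each reward a workable strategy for the subject.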

Fixed Interval Example

Studying for final exams in college is an example of a fixed interval schedule.

Most universities schedule final exams at fixed intervals.

Many students whose grades depend entirely on the exam performance don’t study much at the beginning of the semester, but they cram when it’s almost exam time.

Here, studying is the targeted behavior and the exam result is the reinforcement given after the final exam at the end of the semester.

Because an exam only occurs at fixed intervals, usually at the end of a semester, many students do not pay attention to studying during the semester until the exam time comes.

Variable Interval Schedule (VI)

A variable interval schedule delivers the reinforcer for the first response after a variable amount of time has passed since the previous reinforcement.

This schedule usually generates a steady rate of performance, because the time of the next reward is uncertain, and it is thought to be habit-forming [3].
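A comparable sketch for a VI schedule follows, under the stated assumption that each waiting time is drawn uniformly between 0 and twice the mean (laboratory VI schedules often use other distributions). `VariableInterval`, its parameters, and the VI 30 s setting are hypothetical. Because a new waiting time is drawn after every reinforcement, the subject cannot time it, which is the uncertainty described above.

```python
import random
from typing import Optional


class VariableInterval:
    """Sketch of a variable interval (VI) schedule.

    After each reinforcement a new waiting time is drawn at random; the first
    response made after that waiting time has passed is reinforced.
    Assumption: waiting times are uniform on [0, 2 * mean_interval], so they
    average mean_interval seconds.
    """

    def __init__(self, mean_interval: float, seed: Optional[int] = None) -> None:
        self.mean_interval = mean_interval
        self.rng = random.Random(seed)
        self.last_reinforced = 0.0  # assumption: the session starts at t = 0
        self.current_wait = self._draw_wait()

    def _draw_wait(self) -> float:
        return self.rng.uniform(0, 2 * self.mean_interval)

    def respond(self, t: float) -> bool:
        """Record a response at time t (seconds); return True if reinforced."""
        if t - self.last_reinforced >= self.current_wait:
            self.last_reinforced = t
            self.current_wait = self._draw_wait()  # next wait is unpredictable
            return True
        return False


if __name__ == "__main__":
    vi = VariableInterval(mean_interval=30, seed=1)  # hypothetical VI 30 s
    # A subject that responds steadily, once per second, for ten minutes.
    rewarded = [t for t in range(1, 601) if vi.respond(t)]
    print(rewarded)
```

With steady once-per-second responding, reinforcers arrive at irregular times, roughly one every `mean_interval` seconds on average.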

Variable Interval Example

Students whose grades depend on the performance of pop quizzes throughout the semester study regularly instead of cramming at the end.

Students know the teacher will give pop quizzes throughout the semester, but they cannot tell when the quizzes will occur.

Without knowing the specific schedule, the student studies regularly throughout the entire time instead of postponing studying until the last minute.

Variable interval schedules are more effective than fixed interval schedules at teaching and reinforcing behavior that needs to be performed at a steady rate [4].

Fixed Ratio Schedule (FR)

A fixed ratio schedule delivers reinforcement after a set number of responses has been made.

Fixed ratio schedules produce high rates of response until a reward is received, which is then followed by a pause in the behavior.

Fixed Ratio Example

A toymaker produces toys, and the store only buys toys in batches of five. The faster the toymaker produces toys, the more money he makes.

In this case, the toys are only bought once all five have been made, so toy-making is rewarded and reinforced each time a batch of five is delivered.

People who follow such a fixed ratio schedule usually take a break after they are rewarded and then the cycle of fast-production begins again.
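The toymaker’s arrangement is an FR 5 schedule. The minimal Python sketch below (the `FixedRatio` class is a hypothetical name, not from any library) counts responses and pays out on every fifth one. Setting the ratio to 1 gives continuous reinforcement, since every response is then reinforced.

```python
class FixedRatio:
    """Sketch of a fixed ratio (FR) schedule: every `ratio`-th response pays.

    Continuous reinforcement (CRF) is the special case ratio = 1.
    """

    def __init__(self, ratio: int) -> None:
        if ratio < 1:
            raise ValueError("ratio must be at least 1")
        self.ratio = ratio
        self.count = 0  # responses since the last reinforcement

    def respond(self) -> bool:
        """Record one response; return True if it completes the ratio."""
        self.count += 1
        if self.count == self.ratio:
            self.count = 0  # start counting toward the next reward
            return True
        return False


if __name__ == "__main__":
    batch = FixedRatio(5)  # the toymaker is paid per batch of five toys
    paid_after = [toy for toy in range(1, 16) if batch.respond()]
    print(paid_after)  # [5, 10, 15]
```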

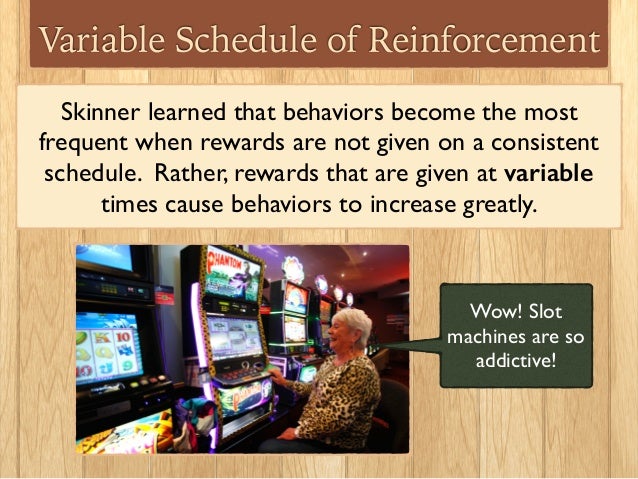

Variable Ratio Schedule (VR)

Variable ratio schedules deliver reinforcement after a variable number of responses are made.

This schedule produces high and steady response rates.

Variable Ratio Example

Gambling at a slot machine or playing the lottery is a classic example of a variable ratio reinforcement schedule [5].

Gambling rewards unpredictably: each win requires a different number of lever pulls. Gamblers keep pulling the lever many times in hopes of winning. For some people, therefore, gambling is not only habit-forming but also very addictive and hard to stop [6].
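A VR schedule can be sketched the same way as FR, except that the response requirement is redrawn at random after every win. In the Python sketch below, `VariableRatio` and the VR 5 setting are hypothetical, and the requirement is assumed to be uniform on the integers 1 to 2 × mean − 1 so that it averages the mean ratio.

```python
import random
from typing import Optional


class VariableRatio:
    """Sketch of a variable ratio (VR) schedule.

    After each reinforcement a new response requirement is drawn at random,
    so the number of responses per reward varies but averages mean_ratio.
    Assumption: requirements are uniform on the integers 1 .. 2*mean_ratio - 1.
    """

    def __init__(self, mean_ratio: int, seed: Optional[int] = None) -> None:
        if mean_ratio < 1:
            raise ValueError("mean_ratio must be at least 1")
        self.mean_ratio = mean_ratio
        self.rng = random.Random(seed)
        self.count = 0  # responses since the last reinforcement
        self.requirement = self._draw_requirement()

    def _draw_requirement(self) -> int:
        return self.rng.randint(1, 2 * self.mean_ratio - 1)

    def respond(self) -> bool:
        """Record one response (e.g. one lever pull); return True on a win."""
        self.count += 1
        if self.count >= self.requirement:
            self.count = 0
            self.requirement = self._draw_requirement()  # next win: unknown
            return True
        return False


if __name__ == "__main__":
    machine = VariableRatio(mean_ratio=5, seed=3)  # hypothetical VR 5
    wins = [pull for pull in range(1, 51) if machine.respond()]
    print(wins)  # wins arrive after unpredictable numbers of pulls
```

A machine in which every pull has the same fixed chance of paying is a random-ratio variant of VR; in this sketch that would mean dropping the drawn requirement and reinforcing a response whenever `self.rng.random() < 1 / self.mean_ratio`.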

Extinction

An extinction schedule (Ext) is a special type of non-intermittent reinforcement schedule in which the reinforcer is discontinued, leading to a progressive decline in the occurrence of the previously reinforced response.

How fast complete extinction happens depends partially on the reinforcement schedules used in the initial learning process.

Among the different types of reinforcement schedules, the variable ratio schedule (VR) is the most resistant to extinction, whereas the continuous schedule is the least resistant [7].

Schedules of Reinforcement in Parenting

Many parents use various types of reinforcement to teach new behavior, strengthen desired behavior or reduce undesired behavior.

A continuous schedule of reinforcement is often the best way to teach a new behavior. Once the response has been learned, intermittent reinforcement can be used to strengthen it.

Reinforcement Schedules Example

Let’s go back to the potty-training example.

When parents first introduce the concept of potty training, they may give the toddler a candy whenever they use the potty successfully. That is a continuous schedule.

After the child has been using the potty consistently for a few days, the parents can switch to rewarding the behavior only intermittently, on a variable reinforcement schedule.

Sometimes, parents may unknowingly reinforce undesired behavior.

Because such reinforcement is unintended, it is often delivered inconsistently. The inconsistency serves as a type of variable reinforcement schedule, leading to a learned behavior that is hard to stop even after the parents have stopped applying the reinforcement.

Variable Ratio Example in Parenting

When a toddler throws a tantrum in the store, parents usually refuse to give in. But once in a while, if they’re tired or in a hurry, they may decide to buy the candy, believing they will do it just that one time.

But from the child’s perspective, such a concession is a reinforcer that encourages tantrum-throwing. Because the reinforcement (buying the candy) is delivered on a variable schedule, the toddler ends up throwing fits regularly in hopes of the next give-in.

This is one reason why consistency is important in disciplining children.

References

- Case DA, Fantino E. The delay-reduction hypothesis of conditioned reinforcement and punishment: observing behavior. J Exp Anal Behav. 1981;35:93-108. doi:10.1901/jeab.1981.35-93

- Dews PB. Studies on responding under fixed-interval schedules of reinforcement: II. The scalloped pattern of the cumulative record. J Exp Anal Behav. 1978;29:67-75. doi:10.1901/jeab.1978.29-67

- DeRusso AL. Instrumental uncertainty as a determinant of behavior under interval schedules of reinforcement. Front Integr Neurosci. 2010. doi:10.3389/fnint.2010.00017

- Schoenfeld W, Cumming W, Hearst E. On the classification of reinforcement schedules. Proc Natl Acad Sci U S A. 1956;42(8):563-570. https://www.ncbi.nlm.nih.gov/pubmed/16589906

- Dixon MR, Hayes LJ, Aban IB. Examining the roles of rule following, reinforcement, and preexperimental histories on risk-taking behavior. Psychol Rec. 2000:687-704. doi:10.1007/bf03395378

- Redish AD, Jensen S, Johnson A, Kurth-Nelson Z. Reconciling reinforcement learning models with behavioral extinction and renewal: implications for addiction, relapse, and problem gambling. Psychol Rev. 2007;114(3):784-805. doi:10.1037/0033-295x.114.3.784

- Azrin NH, Lindsley OR. The reinforcement of cooperation between children. J Abnorm Soc Psychol. 1956:100-102. doi:10.1037/h0042490

Positive reinforcement, using food rewards to increase the likelihood a dog will repeat a desirable behavior, is widely regarded as the most reliable method for teaching commands. While the basic concepts of reward-based training are easy to understand, people sometimes inadvertently inhibit progress by using too many, or too few, treats.

Let’s say you’re in Las Vegas playing a slot machine, but every time you deposit a quarter and pull the arm, you get your one quarter in return. This wouldn’t keep your attention for long, and you’d probably opt for a different machine.

Now, what if you started feeding your hard-earned quarters into the next machine, but for hours on end got none back? Chances are you’d become equally frustrated and end your short gambling career.

Applied to dog training, both of these extremes—continuous reinforcement or none at all—can lead to lower command compliance.

“My dog will only sit if I have a treat.” Over the years, I have heard this refrain many times, and it almost always indicates that the dog was rewarded with treats for sitting on cue too often and for too long. Essentially, the dog had learned two things had to be true for him to comply: the sit cue plus a treat. If either were not true, he’d find something more interesting to do.

When initially teaching a new command, “Continuous Reinforcement” (CR in the geeky learning-theory world) is the most effective approach. For instance, when first teaching a puppy to sit, rewarding each successful completion (or “trial”) makes sense because your focus is on clearly pairing the verbal cue and hand gesture with the behavior: put the quarter in (your puppy sits on cue) and the reward appears (treat!).

But acting as your puppy’s loose slot machine for too long causes him to stop working so hard. Why bother sitting quickly, or at all, when a treat invariably appears? CR for too long also causes the dog to become dependent on the food reward: he will refuse to work unless food is presented. Before you get to that point, usually within a few days of teaching a new cue, it’s time to move to a less predictable reinforcement schedule.

Back to the gambling analogy. Once you’re sure your dog has a grasp on what you’re teaching him, it’s time to become a fair and honest slot machine, dispensing small food rewards less frequently for successful trials. (This is also a good time to find soft treats that won’t easily crumble to bits, and to always have a few hidden in your pocket.)

The psychology behind slots, which entice folks to pump coins into machines for hours on end, is that the probability of winning remains constant even though the number of plays it takes to recoup your money, or better yet, hit the jackpot, changes. The unpredictability makes doing the same mundane activity over and over interesting and exciting. You can take advantage of this same psychology to train your dog faster.

When teaching your dog a new command, once you’ve determined that he knows what you’re expecting from him, begin randomly rewarding successful trials using “Variable Ratio” (VR) reinforcement. Start with a low ratio, rewarding roughly one out of every three trials, then increase the ratio over the course of several training sessions.

For example, when teaching your puppy to sit, provide a small treat for (successful) trials 2, 7, 9, 15, 18, 19, 20, 23 and 25. Notice that during 25 trials, sometimes he gets three rewards in a row, but sometimes, there’s a longer lag between treats. The idea is to keep him guessing—and working!
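The arithmetic behind this example can be checked with a few lines of Python. The sketch takes the rewarded trial numbers listed above, computes the overall reward ratio and the gaps between treats, and finds the longest run of back-to-back rewarded trials; the variable names are illustrative only.

```python
rewarded = [2, 7, 9, 15, 18, 19, 20, 23, 25]  # rewarded trials from the example
total_trials = 25

# Trials between one treat and the next, counting the first gap from trial 0.
gaps = [b - a for a, b in zip([0] + rewarded, rewarded)]
ratio = total_trials / len(rewarded)

# Longest run of consecutively rewarded trials.
longest_run = run = 1
for prev, cur in zip(rewarded, rewarded[1:]):
    run = run + 1 if cur == prev + 1 else 1
    longest_run = max(longest_run, run)

print(f"{len(rewarded)} treats in {total_trials} trials: about 1 in {ratio:.1f}")
print("gaps between treats:", gaps)  # [2, 5, 2, 6, 3, 1, 1, 3, 2]
print("longest run of rewarded trials:", longest_run)  # 3
```

Nine treats in 25 trials is about 1 in 2.8, in line with the “roughly one out of every three” starting ratio, while the gaps range from 1 to 6 trials.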

Over the course of twice-daily training sessions (two to five minutes each), increase the ratio until he is rewarded for roughly one out of every ten successful trials. The behavior should become a happy habit by then, although, to keep commands fresh, continue to occasionally reward your dog for life. In other words, don’t become the slot machine that never pays a jackpot!
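One way to plan the ramp from about 1 in 3 to about 1 in 10 is sketched below in Python. It rests on assumptions not in the text: each successful trial is rewarded independently with probability 1 / ratio (a random-ratio form of VR), the ratio rises linearly from session to session, and the session count, trial count, and function names are hypothetical.

```python
import random
from typing import List


def ratio_for_session(session: int, num_sessions: int,
                      start: float = 3.0, end: float = 10.0) -> float:
    """Hypothetical linear ramp from 1-in-start to 1-in-end across sessions."""
    if num_sessions <= 1:
        return end
    fraction = (session - 1) / (num_sessions - 1)
    return start + fraction * (end - start)


def treat_plan(num_trials: int, ratio: float, rng: random.Random) -> List[int]:
    """Return the successful trial numbers that earn a treat.

    Each trial pays with probability 1 / ratio, so on average one trial in
    `ratio` is rewarded, but the dog cannot predict which one.
    """
    return [t for t in range(1, num_trials + 1) if rng.random() < 1 / ratio]


if __name__ == "__main__":
    rng = random.Random(42)
    num_sessions = 8  # hypothetical: four days of twice-daily sessions
    for s in range(1, num_sessions + 1):
        r = ratio_for_session(s, num_sessions)
        print(f"session {s}: about 1 in {r:.1f} ->", treat_plan(20, r, rng))
```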

There are other types of reinforcement schedules too involved for our purposes here, but one to take advantage of is “Differential Reinforcement of Excellent Behavior” or DRE. This is just a fancy way of saying “better performance earns bigger rewards.” Once you’ve worked through Continuous Reinforcement (treating every time to teach the command) and Variable Ratio (treating randomly to hone the behavior), you can polish the command by handsomely rewarding only the best trials.

Let’s think about DRE in terms of teaching recalls. Once your dog is largely responding to your “come” command, and you’ve worked through Variable Ratio reinforcement (sometimes treating and sometimes not), start rewarding with higher-value treats, or more of what you have, only when your dog immediately and enthusiastically answers your call. If he stops and smells the roses (or whatever that was) en route, no reward is given.
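The DRE rule for recalls amounts to a simple decision. In the Python sketch below, `RecallTrial`, `dre_reward`, and the one-second latency cutoff are hypothetical stand-ins for “immediately and enthusiastically”; the cutoff is an assumption chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class RecallTrial:
    latency_s: float  # seconds from the "come" cue until the dog sets off
    detoured: bool    # stopped to sniff or wander on the way in


def dre_reward(trial: RecallTrial, max_latency_s: float = 1.0) -> str:
    """Hypothetical DRE rule: only fast, direct recalls earn the big reward."""
    if trial.detoured or trial.latency_s > max_latency_s:
        return "no reward"
    return "high-value treat"


if __name__ == "__main__":
    for trial in [RecallTrial(0.4, False), RecallTrial(0.4, True), RecallTrial(2.5, False)]:
        print(trial, "->", dre_reward(trial))
```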

Advancing through these levels is not rigid, and you may combine aspects of more than one as you progress. Be ready to back up a step if you’ve moved too fast—your dog will let you know!